Wayne Cichanski, Vice President of Search and Site Experience at iQuanti, examines LLM mechanics and optimization.

The current challenge for buyers is not a lack of information but a lack of brand presence within AI-driven results. As large language models reshape how search works, visibility is no longer defined by rankings alone; it is determined by whether a brand is selected as a source within the final answer. According to reports, AI-generated summaries such as Google’s AI Overviews now appear in about 18% of all searches, meaning nearly one in five queries is answered directly without users needing to click through.

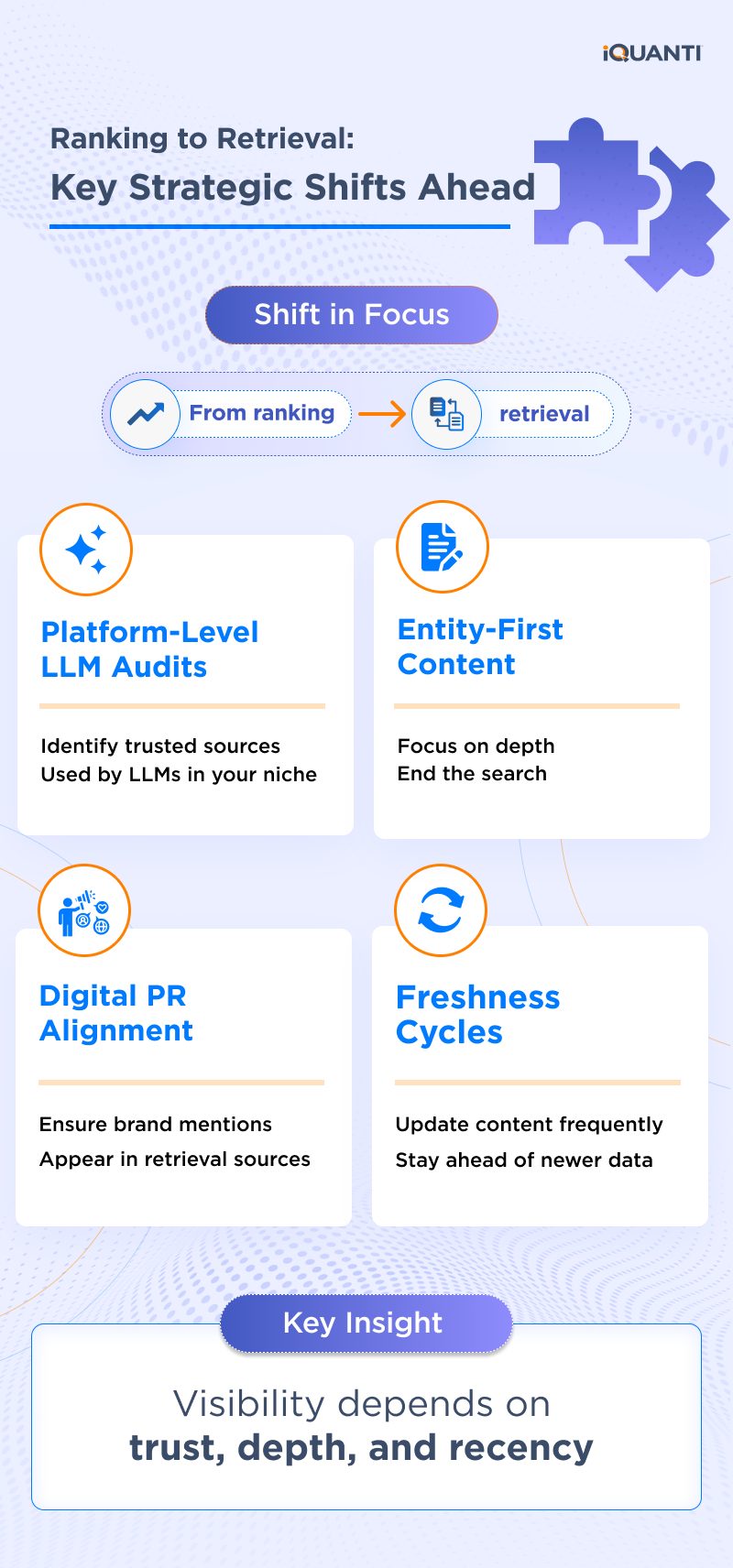

The modern search landscape has shifted from a simple competition for page rankings toward a rigorous selection process. With the rise of multi-agent infrastructure where AI tools delegate tasks to one another, the ultimate objective for a brand is no longer mere visibility. Instead, success is defined by inclusion within the synthesized answer itself. To remain competitive, marketers must master the four-stage engine that drives AI responses: Query Understanding, Retrieval, Filtering and Synthesis.

The AI Search Model: Anticipation Over Retrieval

The traditional search model is reactive: a user enters a query, Google provides a list of results, and the user manually filters through them. AI search, however, is predictive and conversational. It uses intent expansion to layer context, such as a user’s goals, audience profile, and situation, to anticipate the next step in a journey.

When a user asked how to improve a credit score, Google search used to return a list of links. AI Overviews or ChatGPT, by contrast, provide a seven-step guide, explain the variables affecting the score, and offer a proactive 30-day action plan. The LLM is not just matching keywords; it is attempting to fully resolve the problem space by answering the implied questions the user has not even asked yet.

From Topical Authority to Entity Authority

For years, SEOs have focused on Topical Authority, which means proving expertise on a specific subject through content clusters. In the age of LLMs, the focus must shift to Entity Authority. While topical authority is about what you know, entity authority is about who you are in the digital ecosystem.

Entity authority is the overall reputation and digital PR footprint of your domain. It is built through brand recognition, historical presence, and consistent mentions across the web. While a high Domain Authority (DA) might get you into the room, it is the trust and consistency of your entity across diverse platforms that determines whether the LLM gives you the chance to speak by citing you as a source.

Coverage Depth

Coverage depth is a critical factor in how LLMs evaluate and select content. Unlike traditional SEO, where matching a keyword and providing a basic answer could be sufficient, LLMs prioritize content that fully resolves the user’s problem. This means going beyond the primary question to include supporting concepts, related explanations, and potential edge cases. A strong page does not just define a topic but also explains how it works, compares related ideas, and anticipates follow-up questions the user may have. The goal is to eliminate the need for further searching by delivering a complete, self-contained answer. Content that only scratches the surface or addresses a single aspect of the query is less likely to be selected. In contrast, content with comprehensive coverage signals higher relevance, increasing its chances of being included in AI-generated responses.

The Winner-Take-All Filtering Process

In the era of ten blue links, even positions three or four could garner significant traffic. In AI search, the filtering process is far more brutal. While an LLM may surface thousands of pages during the retrieval phase, these are typically filtered down to a shortlist of candidates by the time the answer is synthesized.
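To make the narrowing concrete, here is a toy sketch of the four stages named earlier (Query Understanding, Retrieval, Filtering, Synthesis). It is an illustration only, not how any production LLM works: the `Page` class, the `trust` score, the overlap-times-trust ranking, and the `.example` URLs are all assumptions made up for this example.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    text: str
    trust: float  # hypothetical 0-1 entity-trust score, invented for this sketch


def understand(query: str) -> set[str]:
    """Query Understanding: reduce the query to a set of lowercase terms."""
    return {word for word in query.lower().split() if len(word) > 2}


def retrieve(terms: set[str], corpus: list[Page]) -> list[Page]:
    """Retrieval: keep every page that mentions at least one query term."""
    return [p for p in corpus if terms & set(p.text.lower().split())]


def filter_pages(terms: set[str], pages: list[Page], k: int = 2) -> list[Page]:
    """Filtering: rank by (term overlap x trust) and keep only the top k."""
    def score(page: Page) -> float:
        overlap = len(terms & set(page.text.lower().split()))
        return overlap * page.trust
    return sorted(pages, key=score, reverse=True)[:k]


def synthesize(pages: list[Page]) -> str:
    """Synthesis: a stand-in that only lists the sources that would be cited."""
    return "Answer citing: " + ", ".join(p.url for p in pages)


corpus = [
    Page("a.example", "improve credit score by paying bills on time", 0.9),
    Page("b.example", "credit score basics", 0.4),
    Page("c.example", "improve your credit score with lower utilization", 0.7),
    Page("d.example", "gardening tips for spring", 0.8),
]

terms = understand("improve credit score")
retrieved = retrieve(terms, corpus)          # three of the four pages match
shortlist = filter_pages(terms, retrieved)   # only two survive filtering
answer = synthesize(shortlist)
print(answer)
```

In this toy corpus, the page with only partial term overlap and low trust is retrieved but never cited, which mirrors the winner-take-all effect described above.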

Eliminating the Need for Further Search

The goal for an LLM is to provide an answer so comprehensive that the user has no reason to continue searching. This is a radical departure from the traditional SEO mindset. If your page is keyword-focused with low coverage, the likelihood of being cited is minimal. If it partially answers the query, the likelihood is medium.

To achieve a high likelihood of selection, you must:

- Resolve the Problem Space: Fully explain calculations, compare similar terms, and discuss effects.

- Anticipate Follow-ups: Address the next question before the user asks it.

- Provide Context: Layer in audience-specific information that makes the data actionable.
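One rough way to audit a page against these tiers is to list the implied questions for a topic and check how many the page actually answers. The sketch below assumes this simple ratio is a fair proxy for coverage; the function name `coverage_tier`, the thresholds, and the sample APR questions are hypothetical and chosen only for illustration.

```python
def coverage_tier(implied_questions, answered, low=0.34, high=0.9):
    """Return (coverage ratio, tier) for a page.

    implied_questions: questions a complete answer should resolve.
    answered: the questions this page actually addresses.
    low / high: assumed cut-offs between minimal, medium, and high likelihood.
    """
    wanted = set(implied_questions)
    if not wanted:
        raise ValueError("implied_questions must not be empty")
    ratio = len(wanted & set(answered)) / len(wanted)
    if ratio >= high:
        tier = "high"
    elif ratio > low:
        tier = "medium"
    else:
        tier = "minimal"
    return ratio, tier


implied = [
    "what is apr",
    "how is apr calculated",
    "apr vs interest rate",
    "how does apr affect payments",
]

keyword_page = coverage_tier(implied, ["what is apr"])
partial_page = coverage_tier(implied, ["what is apr", "how is apr calculated"])
full_page = coverage_tier(implied, implied)

print(keyword_page, partial_page, full_page)
```

The thresholds here are arbitrary; the useful part of the exercise is writing down the implied questions in the first place and seeing which ones a page leaves unanswered.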

The Power of Unlinked Brand Mentions

In the Google-dominated era, the backlink was the undisputed king of trust. LLMs, however, evaluate co-citations and unlinked brand mentions with nearly equal weight. In effect, they run a popularity contest across trusted sources like Wikipedia, Investopedia, or industry-specific journals.

If your brand is consistently mentioned by other trusted entities when a specific topic like APR calculations is discussed, the LLM views you as a recognizable and historical authority. This digital PR footprint is often undervalued but acts as a critical signal during the LLM’s selection process.
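For teams that want to measure this footprint, a simple starting point is to count how often a brand appears alongside a topic across a set of sources, split by whether the source links back. The sketch below assumes you already have the source text and outbound links collected; the brand "Acme Bank", the `.example` domains, and the `mention_summary` helper are all hypothetical.

```python
def mention_summary(brand, topic, domain, docs):
    """Count sources that mention brand and topic together, linked vs unlinked."""
    linked = 0
    unlinked = 0
    for doc in docs:
        text = doc["text"].lower()
        # A co-citation here means the brand and the topic appear in the same source.
        if brand.lower() in text and topic.lower() in text:
            if any(domain in url for url in doc["links"]):
                linked += 1
            else:
                unlinked += 1
    return {"linked": linked, "unlinked": unlinked}


docs = [
    {
        "source": "encyclopedia.example",
        "text": "Acme Bank publishes a widely used guide to APR calculations.",
        "links": [],
    },
    {
        "source": "finance-journal.example",
        "text": "For APR calculations, see the Acme Bank explainer.",
        "links": ["https://acmebank.example/apr"],
    },
    {
        "source": "news.example",
        "text": "Acme Bank opened a new branch this week.",
        "links": [],
    },
]

summary = mention_summary("Acme Bank", "APR", "acmebank.example", docs)
print(summary)
```

The third source mentions the brand without the topic, so it is not counted as a co-citation in this sketch.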

The evolution of search is moving from finding a website to finding an answer. Organizations that successfully transition from managing links to managing their entity’s authority will own the discovery journey in the era of AI.

This article is based on insights from our webinar, “How LLMs Choose Sources & Answer Elements: Diving Deeper into AI Search.” Watch the full webinar to explore the complete discussion and key strategies. To explore how financial brands can win with a holistic search framework in the AI era, get in touch.